In this project, Dr. Alex Hall and our team at the UCLA Center for Climate Science undertook a comprehensive investigation of future climate in California’s Sierra Nevada. We used an innovative method we developed to produce fine-scale climate change projections. Unlike past studies, these projections take into account key physical processes that affect the rate of snow loss under warming. Using these projections, we have answered key questions about the fate of the Sierra Nevada snowpack, a critical natural resource that not only supports an iconic ecosystem but also provides freshwater to millions of Californians.

Context

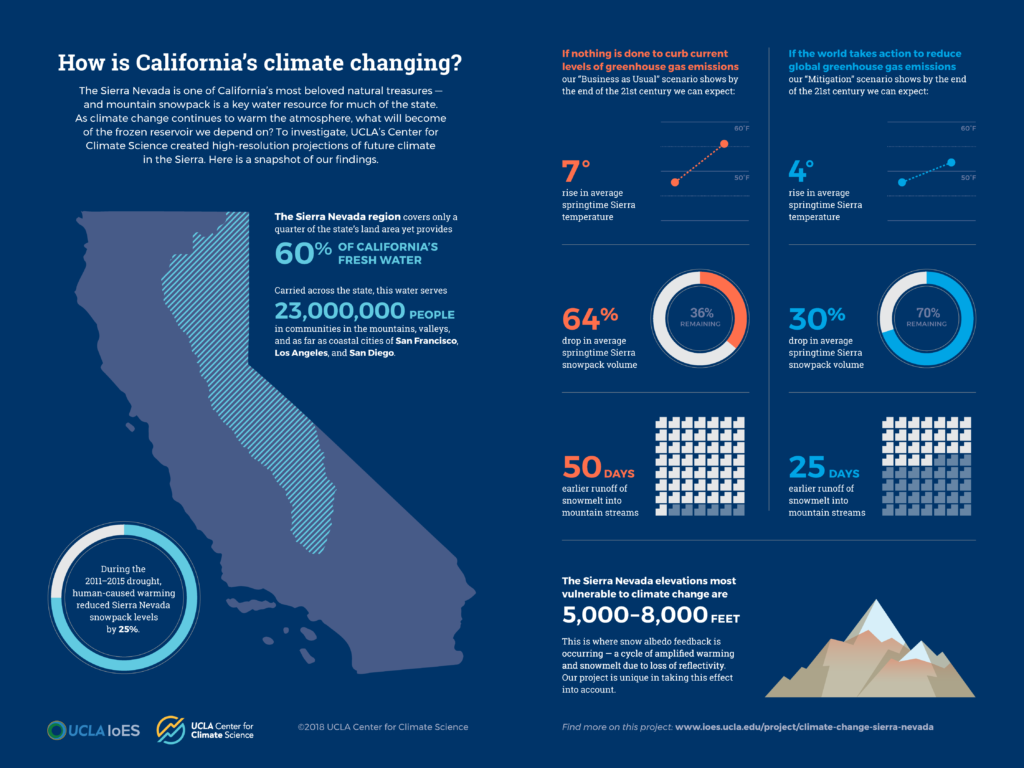

Sierra Nevada snowpack is an important water source for California. It acts as a natural reservoir that holds water in frozen form until it gradually melts over spring and summer, flowing into manmade reservoirs and conveyance systems. Past studies have shown that global warming is expected to shrink the Sierra snowpack. If California is to adapt to these changes, we need to know the specifics: How much warmer will it get? Just how much snow will we lose overall? How much earlier will snow melt and run off? Will soils dry out faster after snow has melted? Will all elevations and all watersheds be affected to the same degree? If we act to reduce greenhouse gas emissions, can we prevent these changes?

The scientific challenge

Answering these questions requires the help of global climate models, powerful computing tools that simulate the climate system. Global climate models are the best tools we have for projecting future climate. But their spatial resolution is too coarse to accurately simulate future climate in topographically complex areas like the Sierra Nevada, where different elevations experience different climatic conditions. There are several methods by which climate scientists can “downscale” global climate model information to create higher-resolution future climate projections. Some of these methods are dynamical, meaning they use a regional climate model, a high-resolution cousin of a global climate model, to simulate future climate. Dynamical downscaling is physically realistic but computationally very expensive. Other downscaling methods are statistical, using mathematical shortcuts to produce higher-resolution projections. Statistical methods are computationally cheap and quick to run, but they don’t necessarily represent the physical dynamics of local climate.

A physical climate phenomenon that’s especially important to represent when studying snow is called snow albedo feedback. Albedo is a measure of how much sunlight is reflected by a surface. Snow has a high albedo, meaning it reflects a lot more sunlight than it absorbs. Other land surfaces have lower albedos, meaning they absorb more sunlight than snow. Snow albedo feedback occurs when warming causes snowpack to shrink at its margins. The ground that is uncovered absorbs more sunlight than snow would have, which enhances the warming at that location. The enhanced warming melts more snow, which exposes more sunlight-absorbing ground, which further enhances warming, and so on. In other words, snow albedo feedback is a feedback loop that leads to greater local warming than would be expected from atmospheric warming alone. If a study doesn’t account for it, snow loss could be significantly underestimated.
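The feedback loop described above can be illustrated with a toy calculation. This is a minimal sketch, not the model used in this project: every constant (the albedo values, the incoming sunlight, the temperature range over which snow cover disappears, and the sensitivity of local temperature to absorbed sunlight) is an illustrative assumption, and the function names are hypothetical.

```python
# Toy snow albedo feedback loop. All constants are illustrative assumptions.
ALBEDO_SNOW = 0.7     # assumed fraction of sunlight reflected by snow
ALBEDO_GROUND = 0.2   # assumed fraction reflected by bare ground
SOLAR = 200.0         # assumed incoming sunlight, W/m^2
SENSITIVITY = 0.01    # assumed deg C of local warming per extra W/m^2 absorbed
T_FULL_SNOW = -5.0    # at or below this temperature (deg C): fully snow covered
T_NO_SNOW = 5.0       # at or above this temperature (deg C): snow free


def snow_fraction(temp_c):
    """Snow-covered fraction, falling linearly from 1 to 0 as it warms."""
    frac = (T_NO_SNOW - temp_c) / (T_NO_SNOW - T_FULL_SNOW)
    return min(1.0, max(0.0, frac))


def absorbed_sunlight(temp_c):
    """Sunlight absorbed by a surface that is partly snow, partly bare ground."""
    frac = snow_fraction(temp_c)
    albedo = frac * ALBEDO_SNOW + (1.0 - frac) * ALBEDO_GROUND
    return SOLAR * (1.0 - albedo)


def local_warming(base_temp_c, background_warming_c, iterations=100):
    """Repeat the loop: warming -> less snow -> more absorption -> more warming."""
    baseline_absorbed = absorbed_sunlight(base_temp_c)
    warming = background_warming_c
    for _ in range(iterations):
        extra = absorbed_sunlight(base_temp_c + warming) - baseline_absorbed
        warming = background_warming_c + SENSITIVITY * extra
    return warming


if __name__ == "__main__":
    # A location near the snow margin versus one that is already snow free.
    print("near snow margin:", local_warming(-2.0, 3.0))
    print("snow free:", local_warming(10.0, 3.0))
```

In this sketch, a location near the snow margin ends up warmer than the background warming alone would suggest, while a location that has no snow to lose warms by exactly the background amount.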

The breakthrough

Our team has developed an innovative approach called hybrid downscaling, which combines the strengths of dynamical and statistical downscaling. First, we run a limited number of dynamical model simulations that represent the full span of global climate model outcomes. Then, we build a statistical model that mimics the dynamical one. The result is a set of future climate projections that is both comprehensive and physically credible. We first used this approach in a first-of-its-kind study of climate change in the greater Los Angeles region. Now we are applying it to project future climate in the Sierra Nevada.
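The two-step idea can be sketched in a few lines of Python with NumPy. This is only an illustration under stated assumptions, not the statistical model built for this project: the global model warming values are random placeholders, `fake_dynamical_run` is a hypothetical stand-in for an expensive regional climate model simulation, and the per-cell linear fit is one simple choice of statistical model among many.

```python
import numpy as np

rng = np.random.default_rng(0)

# Placeholder inputs (assumptions): regional-mean warming, in deg C,
# from 30 hypothetical global climate models.
gcm_warming = rng.uniform(1.5, 5.0, size=30)

# Hypothetical fine-scale grid: 50 cells, each with an elevation in meters.
elevation_m = np.linspace(500.0, 3500.0, 50)


def fake_dynamical_run(regional_warming):
    """Stand-in for a costly regional climate model run (pure assumption)."""
    # Extra warming peaks at mid elevations, loosely imitating the
    # enhancement expected where snow cover retreats.
    enhancement = 1.0 + 0.3 * np.exp(-((elevation_m - 2000.0) / 500.0) ** 2)
    return regional_warming * enhancement


# Step 1: run the "dynamical model" for only a few global models,
# chosen to span the coolest to the warmest outcome.
order = np.argsort(gcm_warming)
chosen = order[np.linspace(0, len(order) - 1, 5).astype(int)]
dyn_output = np.stack([fake_dynamical_run(gcm_warming[i]) for i in chosen])

# Step 2: fit a per-cell linear model (intercept + slope) that mimics
# the dynamical output as a function of the global model warming.
design = np.column_stack([np.ones(len(chosen)), gcm_warming[chosen]])
coeffs, *_ = np.linalg.lstsq(design, dyn_output, rcond=None)

# Step 3: apply the cheap statistical model to every global model.
full_design = np.column_stack([np.ones(len(gcm_warming)), gcm_warming])
emulated = full_design @ coeffs  # shape: (30 models, 50 grid cells)
```

Only five expensive runs are needed in this sketch, yet the fitted model yields fine-scale estimates for all 30 global models. Because the stand-in dynamical model here is exactly linear, the emulator reproduces it perfectly; a real regional model would not be matched this closely.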

In this project, our team first created a simulation of the historical climate from 1981 to 2000 at a spatial resolution of 3 kilometers, or about 1.9 miles. Then we projected future climate for 2041–2060 and 2081–2100 at the same resolution. Future projections represent the full ensemble of latest-generation global climate model output, which underlies the most recent climate change assessment report by the Intergovernmental Panel on Climate Change (IPCC). We created projections for different greenhouse gas scenarios used in the latest IPCC assessment. These include a scenario that roughly represents the present emissions-reduction goals of the recent Paris climate accord, which we call “mitigation,” and a scenario that represents “business as usual.”

Key findings

Our key findings are summarized in the graphic below. Our complete findings are available in our report Climate Change in the Sierra Nevada: California’s Water Future. See our graphics archive for more images like this.

More about the project

Studies on snowpack changes and the effects of warming during wet years are forthcoming. They will provide insight into climate change impacts not only on water resources but also on wildfire, ecosystems, and recreation.

In addition to publishing our findings in science journals, in 2018 we will publish a comprehensive report on the project. This report will synthesize our findings and explain their implications for policymakers and the public. Throughout 2017–2018, Dr. Hall will also give a series of public talks about the project in the Lake Tahoe area, Sacramento, San Francisco, and other locations. For more information, stay tuned to our Events page.

Primary funding for this work was provided by the Metabolic Studio in connection with the Annenberg Foundation. Additional support was provided by the National Science Foundation, the US Department of Energy, the Luskin Center for Innovation, and the Sustainable LA Grand Challenge.

Related Publications